As digital products reach users across continents, performance expectations rise sharply. Audiences are unforgiving of experiences that load slowly, no matter where they are located or which device they use. For organizations operating globally, latency therefore becomes a major technical challenge that must be addressed. Content APIs are at the center of this issue, as they connect content repositories with the front-end applications that audiences use.

However, building a low-latency content API is not simply a matter of having high-performance servers; it is an architectural effort informed by geography, traffic patterns, content organization, and future scaling. Done well, it gives users a consistent experience everywhere and lays the foundation for digital products that are distributed and international.

Factors that Impact Latency in a Global Environment

Latency is the time from request to response, and in a global context geography plays a major role. If a client on one continent requests content from an origin server on another, every request funneled to that origin pays for the distance in added round-trip time. Storyblok and Nuxt are often paired to address this challenge, combining efficient API-driven content delivery with optimized frontend rendering strategies. How a headless CMS transforms digital content strategy becomes particularly clear in these performance considerations, where decoupled delivery allows teams to optimize how and where content is served. However, distance is only part of the picture. Latency is also affected by payload size, processing overhead on the device and the network, network congestion, and the way content is looked up and assembled for rendering.
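As a rough illustration of why distance and payload size both matter, the following back-of-the-envelope sketch estimates a lower bound on response time. The function name, the sample numbers, and the figure of about 200 km per millisecond for signal speed in optical fiber (roughly two-thirds of the speed of light) are assumptions for illustration, not measurements.

```python
# Approximate signal speed in optical fiber: ~200,000 km/s, i.e. 200 km per ms.
FIBER_KM_PER_MS = 200.0


def estimate_latency_ms(distance_km: float, payload_kb: float,
                        bandwidth_mbps: float, server_ms: float) -> float:
    """Lower-bound estimate: one round trip + server processing + transfer time."""
    round_trip_ms = 2 * distance_km / FIBER_KM_PER_MS
    # kilobytes * 8 = kilobits; kilobits / (megabits per second) = milliseconds
    transfer_ms = (payload_kb * 8) / bandwidth_mbps
    return round_trip_ms + server_ms + transfer_ms


# Hypothetical scenarios: a distant origin with a large payload
# versus a nearby edge location with a trimmed payload.
far_origin = estimate_latency_ms(distance_km=10_000, payload_kb=500,
                                 bandwidth_mbps=10, server_ms=50)
near_edge = estimate_latency_ms(distance_km=100, payload_kb=50,
                                bandwidth_mbps=10, server_ms=5)
print(far_origin, near_edge)  # 550.0 46.0
```

Real requests add DNS, TLS, and congestion effects on top of this, so the numbers are best read as a floor rather than a prediction.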

When designing low-latency content APIs, it is crucial that teams understand these factors. Teams need to recognize which sources of latency are inherent to what they are building and which are within their control and must be actively addressed. Fixating on a single source is not helpful: if latency is reduced on the request side while a new delay appears on the response side, the API will still be suboptimal. Only through an end-to-end assessment can companies create stable APIs for all clients, regardless of their distance from the origin. This foundational knowledge drives every subsequent design decision and makes latency reduction achievable worldwide.

How Content Structure and Definition Impact API Performance

API performance depends on how content is defined. If an API returns large, unstructured payloads, clients are forced to download far more information than necessary, responses take longer than they should, and every request consumes more bandwidth and processing than needed. Low-latency content APIs are built around structured, granular content that can be queried for exactly the details a given context requires.

With clearly defined fields and content components, clients can retrieve only what is necessary for a given experience. The more precisely this is done, the smaller the payload and the less time is spent on assembly and transmission, which is especially important for users on slower connections. It also makes caching more effective: small, reusable cached responses are more useful than a single large cached response containing everything, since each can be stored and refreshed independently without reassembling the whole. An API designed with content structure in mind keeps these performance gains as the content inventory and its usage grow.
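To make field-level retrieval concrete, here is a minimal sketch of projecting a structured entry down to the fields a client asks for. The `select_fields` helper and the sample `article` entry are hypothetical and not taken from any particular CMS.

```python
from typing import Any


def select_fields(entry: dict[str, Any], fields: list[str]) -> dict[str, Any]:
    """Return only the requested top-level fields that exist on the entry."""
    return {name: entry[name] for name in fields if name in entry}


# Hypothetical structured entry with a heavy body and SEO block.
article = {
    "id": "a1",
    "title": "Launch notes",
    "summary": "Short teaser",
    "body": "Long rich text ...",
    "seo": {"description": "..."},
}

# A listing card needs three small fields, not the full body.
card = select_fields(article, ["id", "title", "summary"])
print(card)
```

A listing view that only needs a title and summary then never pays for the full body, and the smaller response is also cheaper to cache.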

Global Distribution and Edge Delivery Proximity

The best way to achieve low latency for an international audience is to move content closer to the user. Low-latency content APIs are designed for globally distributed infrastructure so that responses are served as close to the end user as possible. By avoiding long round trips and keeping edge locations consistent with one another, predictably low latency can be achieved.

Wherever possible, APIs should be stateless and predictable to facilitate edge distribution. When content responses can be cached and served from the edge, load times improve for every user regardless of geographic location. The goal, from both the API owner’s and the API consumer’s perspective, is therefore to avoid cache busting caused by personalization at the API level. If this dynamic logic is separated from cacheable content delivery, proximity-based responses become feasible, and latency stops being a side effect of location.
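One way to keep personalization from busting shared caches is to give the two kinds of routes different `Cache-Control` policies. The sketch below assumes a hypothetical split into "content" and "personalized" routes; the lifetimes are arbitrary example values, while the directives themselves (`public`, `max-age`, `s-maxage`, `private`, `no-store`) are standard HTTP.

```python
def cache_control_for(route_kind: str) -> str:
    """Pick a Cache-Control policy for a hypothetical route category."""
    if route_kind == "content":
        # Shared caches (CDN/edge) may keep the response for 5 minutes,
        # browsers for 1 minute. Lifetimes are illustrative assumptions.
        return "public, max-age=60, s-maxage=300"
    if route_kind == "personalized":
        # User-specific responses must never be stored by shared caches.
        return "private, no-store"
    raise ValueError(f"unknown route kind: {route_kind}")


print(cache_control_for("content"))
print(cache_control_for("personalized"))
```

With this split, the bulk of content traffic stays edge-cacheable, and only the small personalized portion has to reach the origin.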

Query Patterns that Encourage Low Latency at Scale

Poorly designed query patterns add complexity that undermines low latency at scale. Beyond offering predictable access points and predictable ways of resolving content relationships, globally used low-latency APIs must support quick responses under high load.

This means deep nesting should be avoided where possible so that clients can quickly get what they need, especially when responses involve relationships between content entities (e.g., collections versus items/subtitles). The ways clients can query content should be streamlined rather than left open-ended in ways that slow things down. Improving query patterns over time does more than increase speed; it also improves stability, since systems can only absorb traffic spikes without degrading the user experience if their query patterns were designed with long-term growth in mind.
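As an illustration of a streamlined relationship pattern, the sketch below resolves all related entries for a list in a single batched lookup and returns them in a flat side section instead of nesting them under each item. The function names, the `included` key, and the in-memory `AUTHORS` store are assumptions made for this example.

```python
from typing import Callable


def list_with_authors(
    articles: list[dict],
    fetch_authors: Callable[[set[str]], dict[str, dict]],
) -> dict:
    """Resolve every referenced author with one batched lookup."""
    author_ids = {article["author_id"] for article in articles}
    authors = fetch_authors(author_ids)  # one lookup, not one per article
    # Related entries are returned once, flat, instead of nested per item.
    return {"items": articles, "included": {"authors": authors}}


# Hypothetical in-memory store standing in for a database or upstream API.
AUTHORS = {"u1": {"name": "Ada"}, "u2": {"name": "Lin"}}
lookups: list[set[str]] = []


def fake_fetch_authors(ids: set[str]) -> dict[str, dict]:
    lookups.append(set(ids))  # record each lookup so the count is visible
    return {author_id: AUTHORS[author_id] for author_id in ids}


articles = [
    {"id": "a1", "author_id": "u1"},
    {"id": "a2", "author_id": "u2"},
    {"id": "a3", "author_id": "u1"},
]
response = list_with_authors(articles, fake_fetch_authors)
print(len(lookups))  # 1
```

Three articles trigger one lookup rather than three, and the shared author appears once in the response rather than being repeated inside each item.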

Caching Strategies Are Built-In API Characteristics

One of the most effective latency-reducing strategies is caching, which works best when it is built in from the outset of API design rather than added as an afterthought. Low-latency content APIs assume that caching will happen at multiple layers: client, edge, and intermediary caches.

Predictable, cacheable responses, explicit cache headers, and consistent endpoints together define the expected caching behavior. If an API gives the same answer to the same question as long as nothing has changed, serving cached responses saves time and origin resources. This requires that low-latency content APIs deliberately structure their responses and cache directives so that the origin is not overworked and responses arrive promptly.
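A common way to let caches confirm that "nothing has changed" is an `ETag` validator combined with conditional requests. The sketch below is one possible approach, assuming a content-hash ETag and an example `max-age` of 60 seconds; the `make_etag` and `respond` helpers are hypothetical, while `ETag`, `If-None-Match`, and the 304 status are standard HTTP.

```python
import hashlib
import json
from typing import Optional


def make_etag(body: dict) -> str:
    """Derive a validator from a canonical JSON form of the body."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return '"' + digest[:16] + '"'


def respond(body: dict, if_none_match: Optional[str] = None):
    """Return (status, headers, body); the body is omitted on a 304."""
    etag = make_etag(body)
    headers = {"ETag": etag, "Cache-Control": "public, max-age=60"}
    if if_none_match == etag:
        # The client's copy is still valid: no payload needs to be sent.
        return 304, headers, None
    return 200, headers, body


status, headers, _ = respond({"title": "Hello"})
revalidated_status, _, revalidated_body = respond(
    {"title": "Hello"}, if_none_match=headers["ETag"]
)
print(status, revalidated_status)  # 200 304
```

Sorting keys before hashing keeps the validator stable when the same content is serialized in a different field order.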

With a low-latency content API, the more often the same content is requested (especially in a global setting), the better performance becomes, because more requests are served from cache. Instead of clients around the world fetching from a centralized server in real time, retrieval becomes local, served from distributed caches with latency reduced by proximity.

Globalization Is Supported Without Latency Increase

Global content is often localized by region, which can complicate API design and introduce processing pitfalls. For example, if an API localizes content only through back-end logic after a user request arrives, responses can take longer to process and may bypass caches.

Low-latency content APIs include localization as part of the content model so that retrieval does not bog down performance. Localization does not have to require additional computation; if it is integrated into the definition of what is presented to a given audience, it need not reduce cache effectiveness or increase payload complexity.
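A minimal sketch of localization living in the content model might store translatable fields as per-locale maps and make the locale part of the cache key. The field layout, the `en` fallback, and both helper names are assumptions for illustration; the sketch also assumes every dict-valued field is a locale map.

```python
DEFAULT_LOCALE = "en"  # assumed fallback locale


def localize(entry: dict, locale: str) -> dict:
    """Resolve per-locale field maps to a single value, with fallback."""
    resolved = {}
    for field, value in entry.items():
        if isinstance(value, dict):
            # Assumption: dict-valued fields map locale codes to values.
            resolved[field] = value.get(locale, value.get(DEFAULT_LOCALE))
        else:
            resolved[field] = value
    return resolved


def cache_key(path: str, locale: str) -> str:
    """Each locale variant gets its own stable, cacheable key."""
    return f"/{locale}{path}"


entry = {
    "slug": "pricing",
    "title": {"en": "Pricing", "de": "Preise"},
    "cta": {"en": "Buy now"},
}
german = localize(entry, "de")
print(german, cache_key("/pricing", "de"))
```

Because the locale is explicit in the key, each language variant can be cached independently instead of being computed per request.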

Thus, low-latency content APIs allow organizations to stay relevant in international markets without sacrificing response times in any region.

APIs Designed for Low Latency Should Be Monitored and Adjusted for Performance Over Time

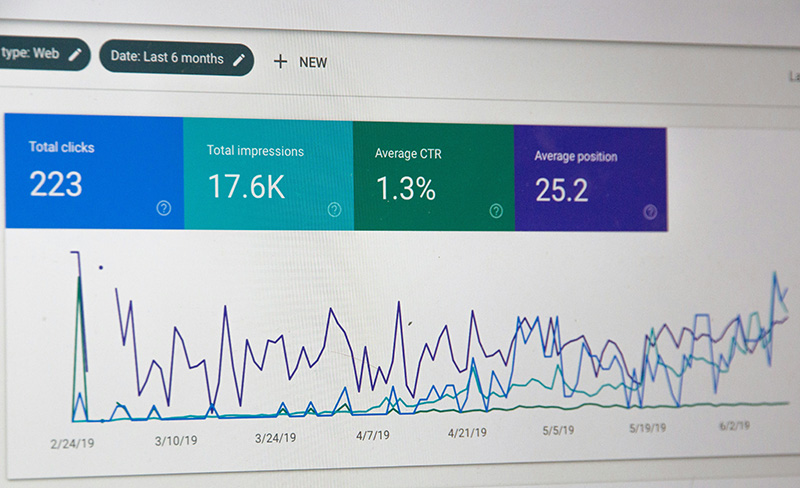

Low-latency content APIs are not a one-and-done effort. User patterns evolve, regions are added, and the sheer amount of content changes. As volume and patterns shift, latency can become a problem even where it was not one before. Monitoring is crucial to keeping users engaged; it also gives insight into how and when users engage.

Performance data gives insight into latency challenges. By examining response times by region, cache hit rates, error rates, and similar metrics, one can assess the state of the API. This data informs adjustments such as refining endpoints, changing cache strategies, and even revising the content model. When teams take ongoing responsibility for performance, low latency remains achievable over time, even as things become more complicated. In an international landscape, one must adapt as readily as one designs.
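The kind of per-region summary described above can be sketched in a few lines. The sample records, field names, and the choice of a nearest-rank 95th percentile are assumptions for illustration; a real setup would read these values from access logs or a metrics system.

```python
import math
from collections import defaultdict


def percentile(sorted_values: list[float], pct: float) -> float:
    """Nearest-rank percentile of an already sorted, non-empty list."""
    rank = math.ceil(pct / 100 * len(sorted_values))
    return sorted_values[max(rank, 1) - 1]


def summarize(samples: list[dict]) -> dict:
    """Compute p95 latency and cache hit ratio for each region."""
    by_region = defaultdict(list)
    for sample in samples:
        by_region[sample["region"]].append(sample)
    report = {}
    for region, rows in by_region.items():
        times = sorted(row["ms"] for row in rows)
        hits = sum(1 for row in rows if row["cache_hit"])
        report[region] = {
            "p95_ms": percentile(times, 95),
            "hit_ratio": hits / len(rows),
        }
    return report


# Hypothetical request log entries.
samples = [
    {"region": "eu", "ms": 20, "cache_hit": True},
    {"region": "eu", "ms": 30, "cache_hit": True},
    {"region": "eu", "ms": 40, "cache_hit": True},
    {"region": "eu", "ms": 500, "cache_hit": False},
    {"region": "us", "ms": 10, "cache_hit": True},
    {"region": "us", "ms": 12, "cache_hit": True},
]
report = summarize(samples)
print(report)
```

Tracking a high percentile alongside the hit ratio tends to surface regional problems that an overall average would hide, such as the single cache miss in the sample data.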

Designing Content APIs Is a Balance Between Performance and Flexibility

One of the greatest struggles in designing content APIs is finding the balance between performance and flexibility. Flexible APIs can accommodate various use cases; however, their responses tend to be less predictable and slower. To create a low-latency API, one must accept intentional constraints that focus on effective delivery of content without stifling creativity.

This is supported through documentation, intentionally crafted endpoints, and sensible defaults that favor performance without limiting what can be built. Flexibility lives at the content level while API behavior remains predictable and powerful. In time, this produces fast, effective APIs that serve any number of products across the world without compromising the user experience. In an international landscape, flexibility grounded in discipline is what sustains low latency over the long term.

Stateless API Design to Minimize Global Latency

Statelessness is a key component of low-latency content API design for global audiences. A stateless API requires no server-side session state to process a request. Each request can therefore be fulfilled by any instance, which minimizes processing time: requests can be routed to the closest available infrastructure, with no session lookup and no need to synchronize state across geographies. In addition, stateless services scale horizontally more readily, because traffic can be sent to any instance; demand surges can thus be absorbed without latency spikes or slower load times for users.

From a content API design perspective, statelessness encourages cleaner boundaries between what clients send and what the API needs. Because everything required is sent with each request, responses become predictable and cacheable (a powerful property for global delivery and edge locations). Operating within a stateless design therefore leads to leaner responses, faster load times, and more efficient data requests across a distributed architecture.
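In code, statelessness roughly means the handler is a pure function of the request and the published content. The sketch below uses a hypothetical in-memory `CONTENT` table and request shape; nothing in it depends on which instance runs it or on what that instance served before.

```python
# Hypothetical published content, identical on every instance.
CONTENT = {
    ("home", "en"): {"title": "Welcome"},
    ("home", "de"): {"title": "Willkommen"},
}


def handle(request: dict) -> dict:
    """Answer from the request alone: no session lookup, no per-instance state."""
    key = (request["slug"], request.get("locale", "en"))
    entry = CONTENT.get(key)
    if entry is None:
        return {"status": 404, "body": None}
    return {"status": 200, "body": entry}


first = handle({"slug": "home", "locale": "de"})
second = handle({"slug": "home", "locale": "de"})
print(first == second)  # True
```

Since identical requests always produce identical responses, any region can answer them, and the responses are safe to cache.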

Payload Reduction to Minimize Imposed Latency

Payload size is one of the more obvious causes of latency, yet its impact is often underestimated. Imagine a user on a mobile device or a poor WiFi connection: even a small delay in transfer can make the experience unacceptable. Low-latency content APIs address this with strategies that reduce payload size without sacrificing content richness. Rather than returning generic, deeply nested responses, API providers should let the requester’s intent drive the response: return only the needed fields, eliminate excess lookups, and limit request/response trees that slow things down unnecessarily.

Reducing payload size without reducing content means relying on composition rather than deep hierarchy. By returning small, common responses that clients reuse and combine into rich experiences, low-latency content APIs avoid large responses while still delivering what the client ultimately needs. Over time, smaller payloads also translate into lower bandwidth costs across regions as new options become available and content libraries expand.
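Client-side composition of small responses could look something like the sketch below, where a page is assembled from component responses and each distinct component is fetched only once. The `compose_page` helper, the component names, and the fake fetch function are hypothetical.

```python
from typing import Callable


def compose_page(layout: list[str],
                 fetch_component: Callable[[str], dict]) -> list[dict]:
    """Build a page from small component responses, reusing repeats."""
    cache: dict[str, dict] = {}
    page = []
    for component_id in layout:
        if component_id not in cache:
            cache[component_id] = fetch_component(component_id)
        page.append(cache[component_id])
    return page


# Hypothetical small component responses served by the content API.
COMPONENTS = {
    "header": {"type": "header", "links": ["Home", "Docs"]},
    "teaser": {"type": "teaser", "text": "Try it today"},
    "footer": {"type": "footer", "text": "Imprint"},
}
calls: list[str] = []


def fake_fetch(component_id: str) -> dict:
    calls.append(component_id)  # record fetches to show the reuse
    return COMPONENTS[component_id]


page = compose_page(["header", "teaser", "teaser", "footer"], fake_fetch)
print(len(page), calls)  # 4 ['header', 'teaser', 'footer']
```

The page renders four blocks from three small fetches, and each of those small responses can be cached and reused on other pages as well.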

Predictable Endpoints Increase Cache Effectiveness

Low-latency operations benefit from predictability. APIs with predictable endpoints and response structures can be cached worldwide far more effectively. When the same request yields the same response, that response can be cached and reused. Clients can receive their responses nearly instantaneously if the trip to the origin does not have to be repeated.

Creating a predictable environment therefore means minimizing unnecessary variation in API behavior. Query parameters should be well defined, defaults should be sensible, and response elements that change dynamically and invalidate caches for no legitimate reason should be avoided. This limits unnecessary load on the origin and makes performance more stable across regions. For global operations, predictability is not only a technical concern but also a performance advantage for cache effectiveness.
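One practical form of this predictability is normalizing request URLs before they are used as cache keys, so that equivalent requests map to a single cache entry. The allow-list, the default values, and the `canonical_url` helper below are assumptions chosen for this sketch.

```python
from urllib.parse import urlencode

# Hypothetical API contract: accepted parameters and their defaults.
ALLOWED = {"locale", "limit", "fields"}
DEFAULTS = {"locale": "en", "limit": "20"}


def canonical_url(path: str, params: dict) -> str:
    """Drop unknown and default-valued parameters, then sort the rest."""
    kept = {
        key: str(value)
        for key, value in params.items()
        if key in ALLOWED and str(value) != DEFAULTS.get(key)
    }
    query = urlencode(sorted(kept.items()))
    return f"{path}?{query}" if query else path


a = canonical_url("/articles", {"locale": "de", "fields": "title"})
b = canonical_url("/articles", {"fields": "title", "locale": "de", "utm_source": "mail"})
print(a == b, a)  # True /articles?fields=title&locale=de
```

Tracking parameters and differences in parameter order no longer fragment the cache, so more requests hit an existing entry.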

Accounting for Global Traffic Spikes Without Latency Impact

Global traffic does not arrive evenly across time and place; it can spike, whether because of global launches or as activity shifts between time zones during the day. Low-latency content APIs must be able to absorb this variability without degrading performance.

They need to be designed with uneven loads in mind, with API design, infrastructure, and caching working together. Stateless, cacheable responses combined with geo-distributed delivery endpoints handle load with minimal latency impact. Should one region generate an unusually large number of requests, those requests need not travel to a centralized origin; they can be served by the geo-distributed layer. When APIs are designed to degrade gracefully and keep response times acceptable under load, they can better accommodate global access, irrespective of regional spikes.
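Graceful degradation can take many forms; one simple possibility is a regional cache that falls back to a stale copy when the origin is overloaded or unreachable. The `StaleTolerantCache` class below is a hypothetical sketch of that idea, not a production cache: it ignores eviction and concurrency, and the injectable clock exists only to make the behavior easy to exercise.

```python
import time
from typing import Any, Callable


class StaleTolerantCache:
    """Serve fresh entries within the TTL; fall back to stale ones on error."""

    def __init__(self, ttl_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self.ttl_s = ttl_s
        self.clock = clock
        self._store: dict[str, tuple[float, Any]] = {}

    def get(self, key: str, fetch: Callable[[], Any]) -> Any:
        now = self.clock()
        cached = self._store.get(key)
        if cached is not None and now - cached[0] < self.ttl_s:
            return cached[1]  # still fresh: the origin is not contacted
        try:
            value = fetch()
        except Exception:
            if cached is not None:
                return cached[1]  # origin failed: degrade to the stale copy
            raise  # nothing cached yet, so the error has to surface
        self._store[key] = (now, value)
        return value
```

For example, `cache.get("/en/home", load_home)` with a hypothetical `load_home` function would return the last good response if `load_home` starts raising during a spike, so users in that region keep getting answers while the origin recovers.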
